ByT5: Towards a Token-Free Future with Pre-trained Byte-to-Byte Models

Abstract

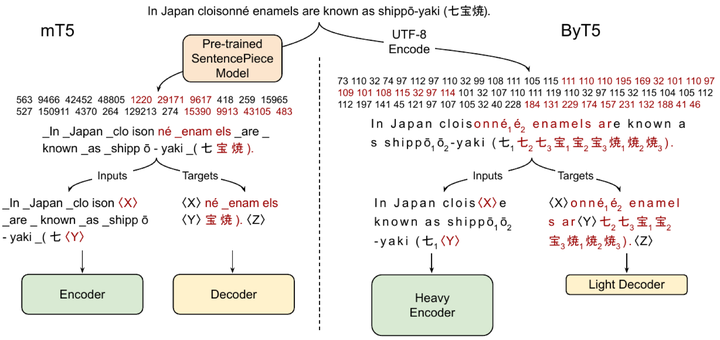

ByT5 studies byte-level sequence modeling at scale and shows that models operating directly on UTF-8 bytes, with no tokenizer or subword vocabulary, achieve strong multilingual performance.
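As a rough illustration of what byte-level input means in practice, the sketch below maps text to one integer per UTF-8 byte and back. The function names `encode_bytes` and `decode_bytes` and the `offset` parameter are hypothetical, introduced only for this example; they are not the ByT5 codebase's API, and the way special IDs are reserved here is an assumption.

```python
def encode_bytes(text: str, offset: int = 0) -> list[int]:
    """Map text to one integer ID per UTF-8 byte, shifted by `offset`.

    `offset` is an illustrative assumption: it leaves room for a few
    reserved IDs (e.g. padding, end-of-sequence) below the byte range.
    """
    return [b + offset for b in text.encode("utf-8")]


def decode_bytes(ids: list[int], offset: int = 0) -> str:
    """Invert encode_bytes; malformed byte sequences are replaced, not raised."""
    return bytes(i - offset for i in ids).decode("utf-8", errors="replace")


if __name__ == "__main__":
    # The same 256-value alphabet covers every script; only length changes.
    for sample in ["hello", "héllo", "日本語"]:
        ids = encode_bytes(sample)
        print(sample, len(sample), len(ids), ids)
```

No vocabulary file is needed, and any string in any language round-trips exactly. The cost is sequence length: non-Latin scripts use several bytes per character, so byte sequences are longer than the corresponding subword sequences.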

Publication

Transactions of the Association for Computational Linguistics