The Power of Scale for Parameter-Efficient Prompt Tuning

Abstract

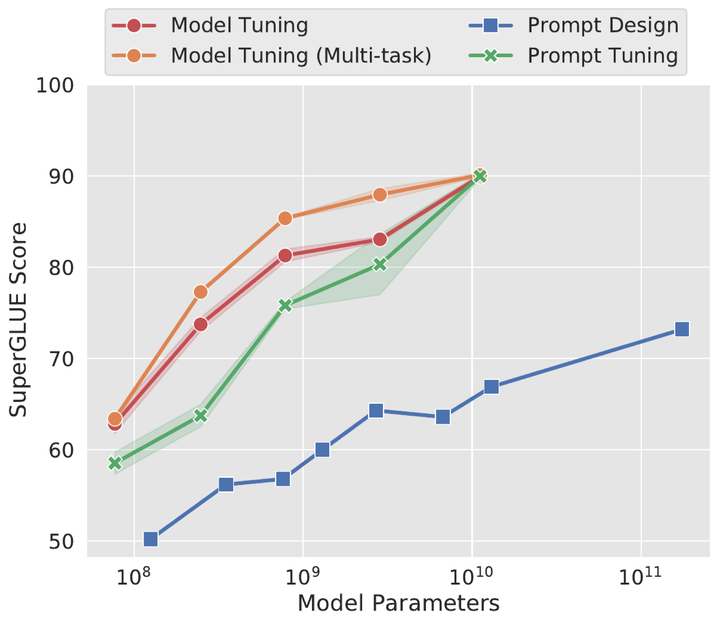

This work shows that the quality of prompt tuning improves as model scale increases, enabling parameter-efficient adaptation of large language models.

Publication

Proceedings of the Conference on Empirical Methods in Natural Language Processing